Why Does My Writing Get Flagged as AI (When It’s Not)?

Writing can be flagged as AI even if a human wrote it, mainly because detectors look for statistical patterns rather than understanding intent.

Last year, penalties for AI-related misconduct rose sharply, with AI-related plagiarism making up 75% of all student plagiarism cases in some regions. Against this backdrop, it's no surprise that everyone is on high alert about whether writing is AI-generated.

Having your writing marked as AI can be extremely stressful, particularly when you know you wrote it yourself. You might have a deadline to hit, someone waiting for your work, and no real idea why the issue has cropped up or how to fix it. Instead of focusing on the work, you're now forced to prove that your own writing is human.

Here, we'll examine why that happens, how AI detectors make decisions, and what to do if your human writing is flagged by mistake. By the end, you'll understand why AI detectors aren't perfect and which signals they actually use.

TL;DR:

Writing can be flagged as AI even if a human wrote it, mainly because detectors look for statistical patterns rather than understanding intent. Writing that is overly polished or devoid of personal voice can trigger false positives. The best way to deal with a false positive is to review the highlighted sections and use the result as a signal to make your writing sound more like you.

What Does ‘Flagged as AI’ Mean?

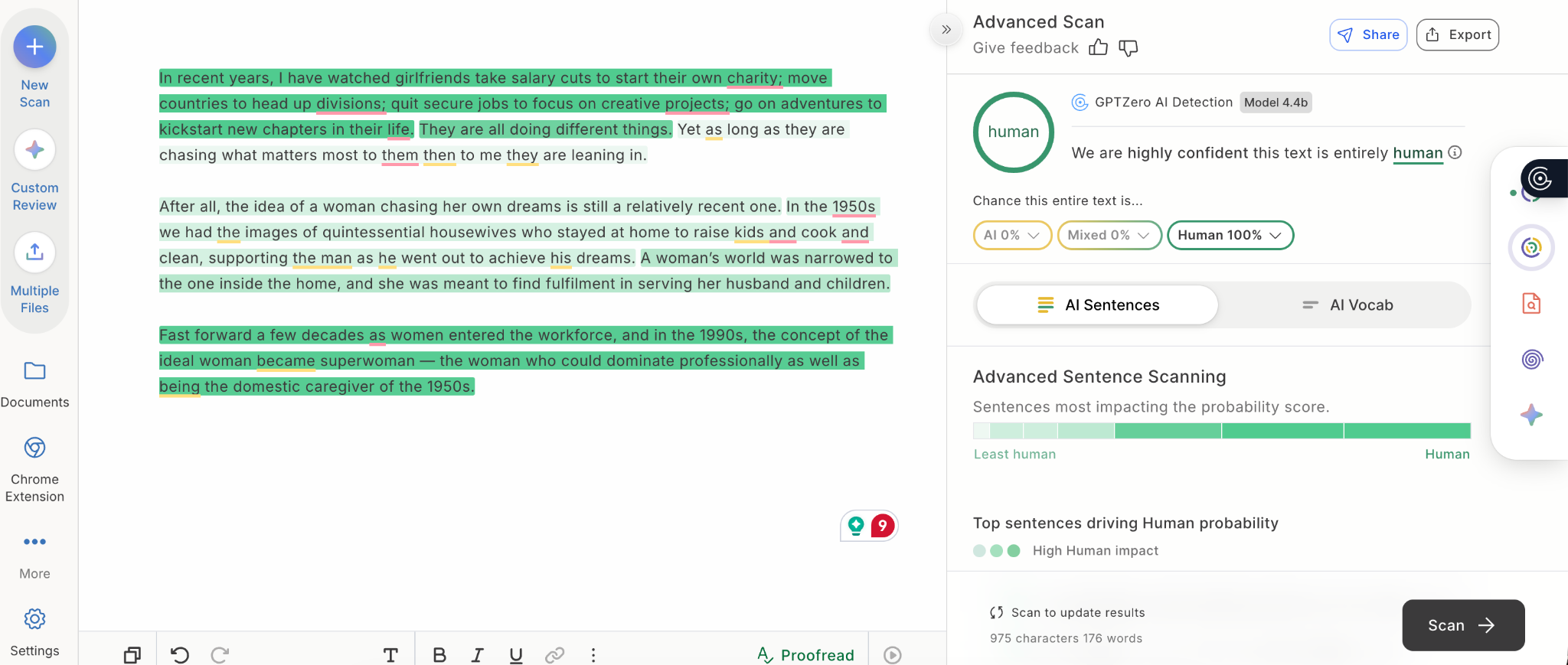

So what exactly happens when a detector flags your work? When you submit your work to an AI detector, it returns a confidence score. In GPTZero, that result combines a document-level view with more granular sentence-level analysis, so you can see which parts of the draft contribute most to the result.

This level of detail lets you examine closely where a text came from, which matters because AI is being used in increasingly complicated ways. In fact, we were the first detector to include a “mixed” human-and-AI classification, and our model outputs 3 possible classifications instead of the usual binary (human vs. AI):

- written entirely by a human

- written entirely by an AI

- written by a mix of human and AI

This allows for a more nuanced AI detection result. In the same vein, we were also the first detector to provide confidence categories for our classifications: “uncertain,” “moderately confident,” and “highly confident.”

However, with more students turning to AI for homework assistance, what teachers ideally want to see is work that is 100% human. What does that look like? Here is an example, using something I wrote years ago.

Of course, there are plenty of cases where you might have used an editing tool to clean up your writing, which in turn set the detector off. This can produce some surprising results. As one freelance writer from the GPTZero community told us:

“Sometimes I put in text, like before I use Grammarly, and then it comes out as 100% human. And then if I use Grammarly, I might change just a tiny bit just by adding commas and other things like punctuation. And then it comes out to 100% AI... it's gone from 0% to 100% AI.”

A result like this means the detector thinks your writing looks AI-generated, not that it actually is.

Why Your Writing Gets Flagged as AI

AI detectors are improving, but no AI detector is perfect. Detectors look for patterns in language, and human writing can share those patterns. This is why responsible teachers treat detector results as clues rather than definitive proof, and always pair them with their own judgment. If your writing has been flagged, it doesn't automatically mean you did something wrong, but there are a few common reasons it happens.

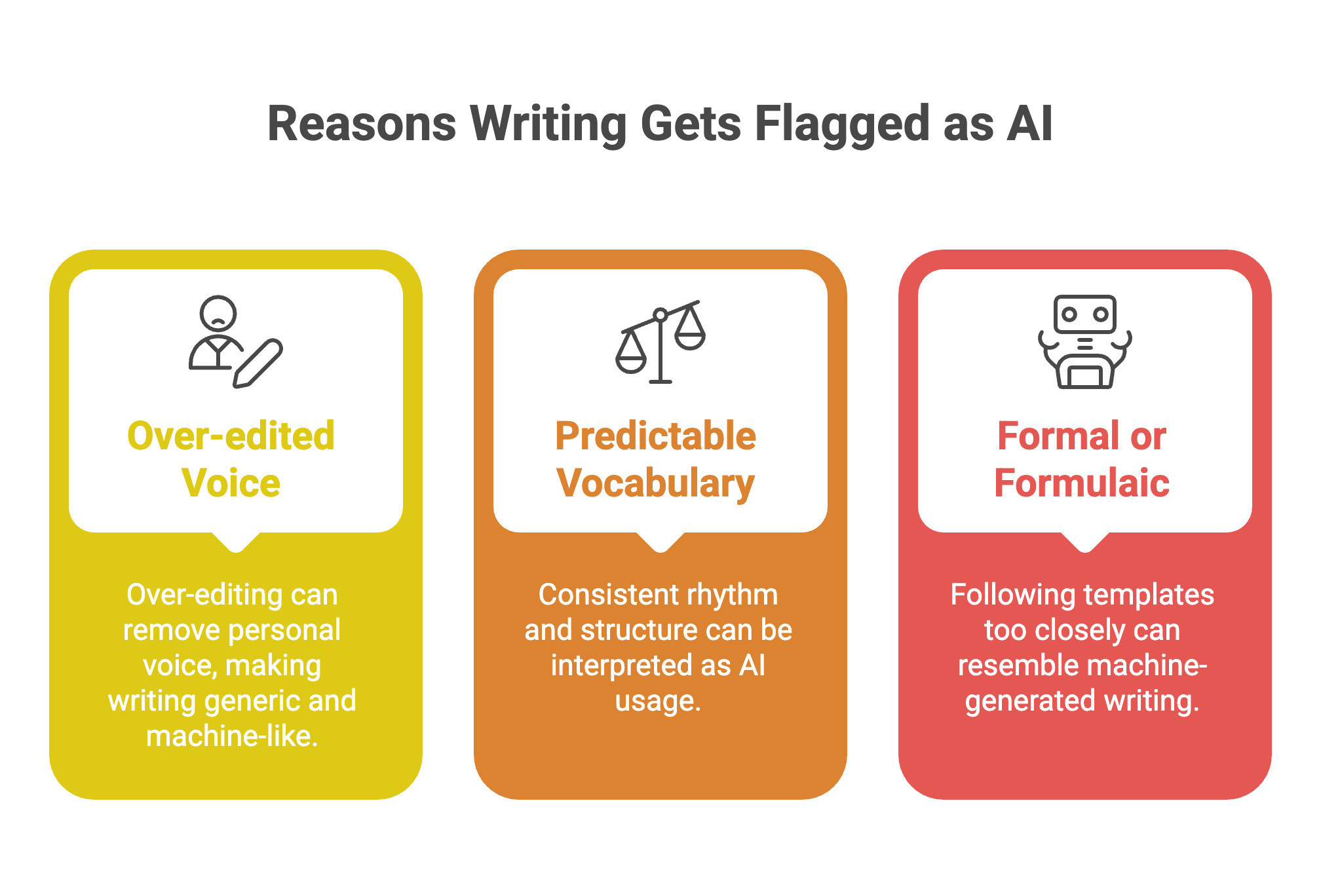

1. You’ve over-edited your personal voice out of the piece

Tools like Grammarly can ‘clean’ your writing, but they can also make it more generic. If you use them too heavily, your thinking can start to appear more machine-like.

One master's student told us, "I was using Grammarly and that was also flagged as AI, so I stopped using Grammarly.”

2. Your vocabulary is too predictable

If your word choices are always the most expected ones and every sentence follows the same rhythm and structure, detectors may interpret that consistency as a sign that AI has been used.

As one grader told us, “If a person is not a native English speaker and you are assessing their English, it's not that straightforward… they would use a lot of the vocabulary that we see in the LLMs."

3. The writing is trying too hard to be formal or formulaic

Essay templates can help, but if your work follows a set structure too rigidly, it may start to resemble machine-generated writing. AI-assisted text is often coherent but vacuous, and if you're trying too hard to sound academic, your writing can fall into the same trap.

This can be particularly difficult with formal writing. As one writer told us, “[GPTZero is] saying that it's too robotic and lacks creative grammar. Well, yeah, okay... this isn't, you know, Charles Dickens. It needs to be formal and it’s not meant to be too creative.”

This can also happen in professional work. As Damon Delcoro (Founder, UltraWeb Marketing) shared with Solowise, “We've actually dealt with this from the client side when vetting writers for our content campaigns. The false positives usually happen when writers use overly clean sentence structures, repetitive transitional phrases, or that perfectly balanced ‘introduction-body-conclusion’ format that AI loves. I've seen excellent human writers get flagged just because they were trying too hard to be ‘professional.’”

How AI Detectors Work, and Why They Sometimes Get It Wrong

To understand why this happens, it helps to look at how these tools actually work. The key thing to remember is that AI detectors are not like metal detectors, which go off at the slightest trace of a specific substance. Instead, AI detectors are powered by models that work by spotting recurring patterns.

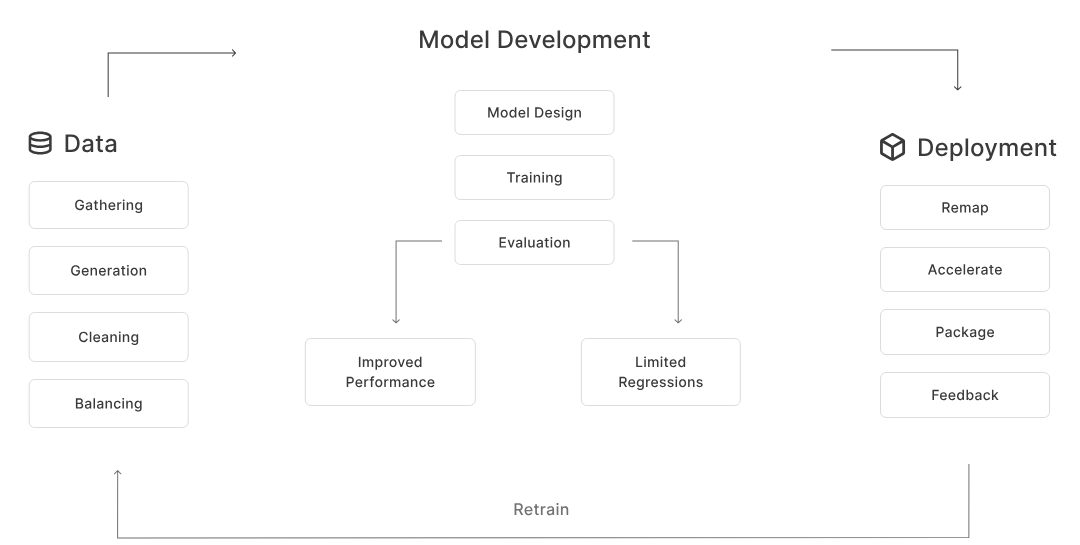

As Edwin Thomas, Machine Learning Engineer, shared: “AI detectors work by learning to recognize writing patterns such as style, sentence structure and word choice from large collections of both human and AI written text.”

“While our AI detector is highly accurate, it can occasionally have misclassifications when a piece of writing uses an uncommon style that falls outside of what our model has seen before,” he continues. “As writing evolves we continually update the model with new data to keep pace with human and AI trends.”

How AI Detectors Analyze Your Writing

AI detectors look for patterns that are more common in machine-generated writing than in human writing. In essence, they answer one question: does this piece of writing look statistically predictable in a way that suggests AI may have been involved?

You can read more about how the detector works here, but in short, we use deep learning to keep pace with AI advancements and deliver precise, reliable results that help you understand and interpret the origin of a piece of text. However, not all detectors work the same way. Two concepts are commonly linked to how AI detection works: perplexity and burstiness.

Perplexity measures how predictable a piece of writing is to a language model. Text with low perplexity is conventional and easy for a language model to produce. AI-generated writing often falls into this category because language models tend to choose the most likely next word in a sequence.
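To make the idea concrete, here is a minimal Python sketch, assuming you already have the probability a language model assigned to each token in a passage. It uses the common textbook definition of perplexity (the exponential of the average negative log-probability per token). The `perplexity` function and the probability values are hypothetical illustrations, not output from a real model and not a description of how GPTZero's detector works.

```python
import math


def perplexity(token_probs):
    """Perplexity = exp(mean negative log-probability per token).

    token_probs: probabilities a language model assigned to each token
    that actually appeared in the text (hypothetical values below).
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    negative_log_likelihoods = [-math.log(p) for p in token_probs]
    return math.exp(sum(negative_log_likelihoods) / len(negative_log_likelihoods))


# Hypothetical per-token probabilities for two short passages.
predictable = [0.9, 0.8, 0.85, 0.9, 0.75]  # the model was rarely surprised
surprising = [0.2, 0.05, 0.4, 0.1, 0.3]    # the model was often surprised

print(round(perplexity(predictable), 2))
print(round(perplexity(surprising), 2))
```

The predictable passage scores much lower than the surprising one. As a sanity check, a model that assigned probability 0.5 to every token would give a perplexity of exactly 2.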

The other concept often mentioned alongside perplexity is burstiness, which is about variation. Normal human writing varies in sentence length, rhythm, tone, and complexity, while AI writing tends to sound flatter and more even.
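There is no single agreed formula for burstiness. One simple proxy, sketched below in Python as an assumption rather than any detector's actual method, is the coefficient of variation of sentence lengths: the standard deviation of words per sentence divided by the mean. The helper names and the naive regex sentence splitter are hypothetical.

```python
import re
import statistics


def sentence_lengths(text):
    """Word counts per sentence, using a naive split after '.', '!' or '?'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (std / mean).

    0.0 means every sentence has the same length; higher means more variation.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


even = "The cat sat on the mat. The dog lay on the rug. The bird sat on the perch."
varied = ("I ran. Then, after what felt like an hour of waiting in the cold rain, "
          "the bus finally arrived. Relief.")

print(sentence_lengths(even), round(burstiness(even), 2))
print(sentence_lengths(varied), round(burstiness(varied), 2))
```

The first sample scores 0.0 because every sentence is six words long; the second mixes two-, seventeen-, and one-word sentences and scores above 1.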

There is also the issue of feature-based classification, as AI writing researcher Ashley Segal explains: “Machine learning classifiers are trained on large datasets of known human and AI text, learning subtle statistical patterns that distinguish them. These models can achieve high accuracy but may overfit to specific training data characteristics, leading to false positives when encountering human writing that happens to share statistical properties with the AI-generated training examples.”

What Triggers AI Detection

We've written extensively about the telltale signs of AI-generated writing. The most common are:

Repetitive Patterns in Text

Human writers tend to mix and match their language. In AI-generated writing, the same patterns can repeat to an overwhelming degree.
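One rough way to quantify this kind of repetition is to count how many three-word sequences (trigrams) appear more than once. The Python sketch below is a hypothetical illustration of that idea, not a signal GPTZero is confirmed to use; the `repeated_trigram_ratio` helper is invented for this example, and its tokenizer is deliberately naive, keeping only lowercase letters and apostrophes.

```python
import re
from collections import Counter


def repeated_trigram_ratio(text):
    """Share of word trigrams that occur more than once in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    if not trigrams:
        return 0.0
    counts = Counter(trigrams)
    # Count every occurrence of any trigram that appears at least twice.
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(trigrams)


repetitive = ("It is important to note that costs rose. "
              "It is important to note that sales fell.")
varied = "The quick brown fox jumps over the lazy dog"

print(round(repeated_trigram_ratio(repetitive), 2))
print(repeated_trigram_ratio(varied))
```

In the repetitive sample, 8 of the 14 trigrams come from the repeated opener, giving a ratio of about 0.57; the varied sentence has no repeated trigrams at all.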

Formulaic Sentence Structures

Humans tend to write with a mixture of long and short sentences. AI text, by contrast, can fall into a rigid, formulaic style even with careful prompting.

Monotonous Tone

Human writing tends to have an inherent sense of voice. AI-generated writing, meanwhile, can feel flat and lacking in distinctiveness.

Lack of Personal Touch

Another mark of AI writing is a total absence of a personal touch. Human writing will ideally include examples that are unique to them or insights that are theirs alone.

Zero Typos

AI often generates impeccably clean copy that lacks the small errors humans tend to make, such as minor grammatical inconsistencies.

Generic Examples vs. Real-World Detail

A suspicious indicator of AI-generated content is vague examples that could be slotted in almost anywhere. The key word here is cookie-cutter: AI content tends to be vague and lacking in specificity, especially for recent events.

The more boxes your draft checks, the more likely it is to sound AI-assisted, even if you wrote it yourself.

Why Human Writing Gets Flagged (False Positives)

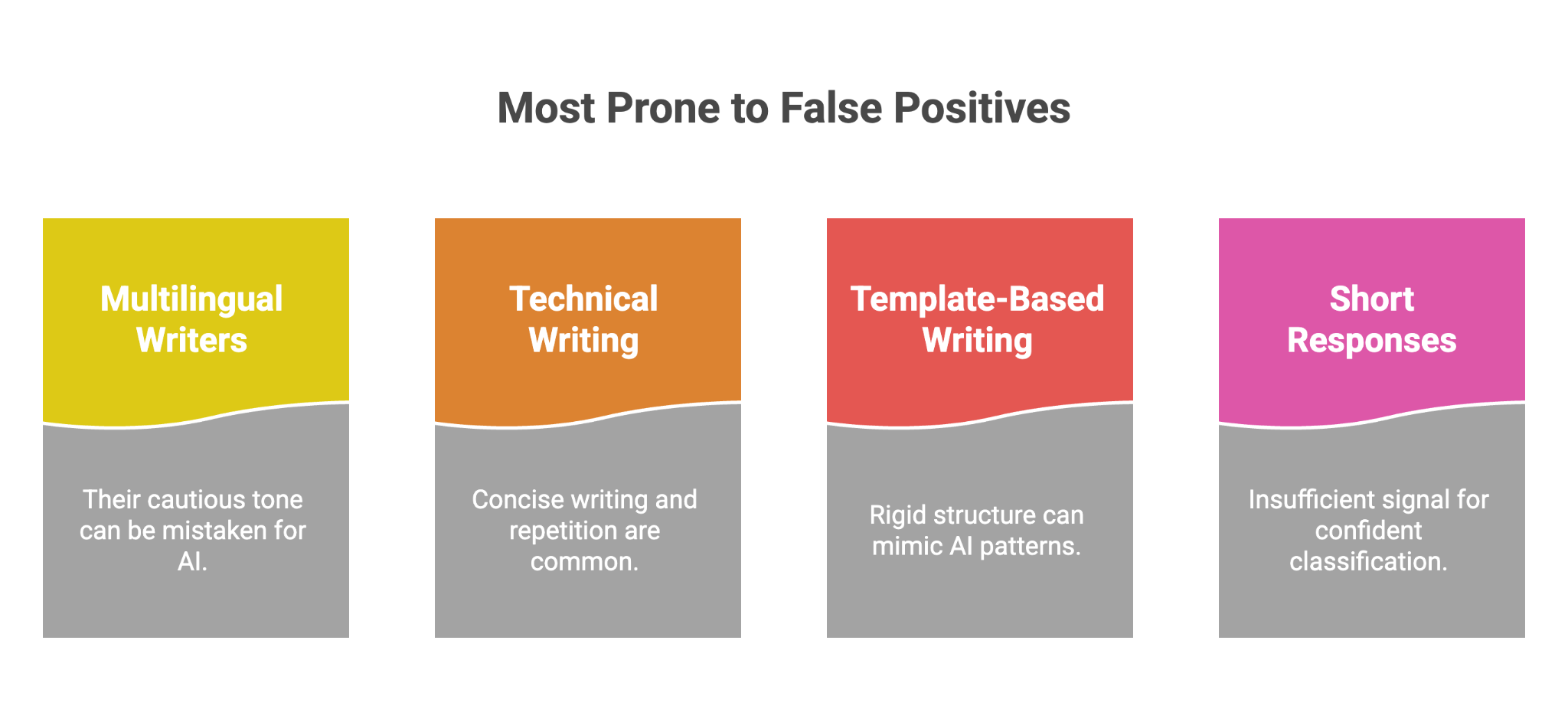

This is where things get more complicated: writing done entirely by a human can still be flagged by AI detectors if it sounds similar to AI-generated text. This trap is especially likely for the following writers and types of writing:

- multilingual or ESL writers, whose work may be more cautious in tone

- technical writing, where concise writing and repetition are often necessary

- test-prep or template-based writing, where structure is rigid by design

- short responses, where there is not enough signal for confident classification

Our team is dedicated to de-biasing our AI classification models for educational use cases. For example, our work on reducing ESL bias in classification since April 2022 has lowered AI detection's false positive rate on TOEFL texts to 1.1%. We achieved this through several methods, including model parameter tagging that incorporated an “education” tag in model training, text preclassification at the model output step, and representative dataset insertions. By training a classification model to predict beforehand whether a text is likely from an ESL writer, we ensure the AI identification model has this information when making a classification.

We keep a close eye on false positives at GPTZero. On our standardized benchmarking page, you’ll find the results of a comprehensive evaluation of our AI detector across a variety of domains, LLMs, and languages. Evaluations are updated quarterly, and raw predictions are available for researchers interested in reproducing results.

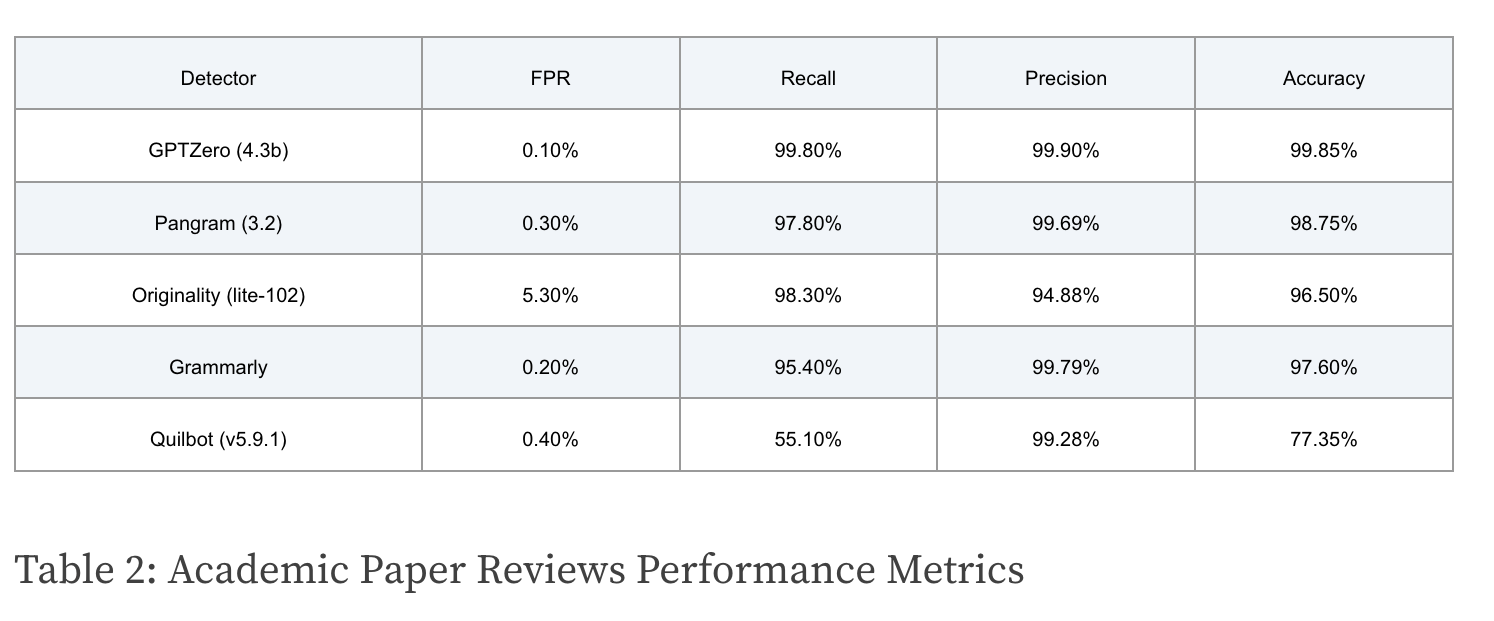

The results show that GPTZero's detector is the most accurate solution available. A false positive (FP) is a human-written text incorrectly predicted as AI, and the false positive rate (FPR) is the percentage of human texts misclassified as AI. For Academic Paper Reviews, we're proud to have the lowest FPR among competitors, as Table 2 shows.
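The FPR definition above is simple arithmetic: false positives divided by all human-written texts (false positives plus true negatives). The Python sketch below computes it from hypothetical labels and predictions. It assumes just two labels, "human" and "ai"; a three-way output that includes "mixed" would need its own rule for how those predictions are counted. The function name and the sample data are illustrative, not taken from GPTZero's benchmark.

```python
def false_positive_rate(labels, predictions):
    """Return FP / (FP + TN): the share of human-written texts predicted as AI.

    Assumes two labels, "human" and "ai". Texts whose true label is "ai"
    are skipped, because they cannot be false positives.
    """
    if len(labels) != len(predictions):
        raise ValueError("labels and predictions must be the same length")
    human_predictions = [p for y, p in zip(labels, predictions) if y == "human"]
    if not human_predictions:
        raise ValueError("no human-written texts in the sample")
    false_positives = sum(1 for p in human_predictions if p == "ai")
    return false_positives / len(human_predictions)


# Hypothetical evaluation: 1,000 human texts, 11 of them wrongly flagged as AI,
# plus 500 AI texts that do not affect the FPR.
labels = ["human"] * 1000 + ["ai"] * 500
predictions = ["ai"] * 11 + ["human"] * 989 + ["ai"] * 500
print(f"FPR = {false_positive_rate(labels, predictions):.1%}")
```

With 11 of 1,000 human texts flagged, the FPR is 1.1%. Note that the AI-written texts have no effect on this number, which is why FPR is usually reported alongside other metrics.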

Another point to remember is that everyone is becoming more aware of AI-generated writing. As Guardian journalist Stuart Heritage brilliantly captured, some stylistic tics are a dead giveaway for ChatGPT, chief among them the “it’s not X, it’s Y” pattern. However, he admits the tic has now ruined even human writing for him:

“Although ‘it’s not X, it’s Y’ predates ChatGPT, I cannot hear it without assuming that AI made it. A few weeks ago, I was rewatching the Mad Men episode where Don Draper pitches a watch. ‘It’s not a timepiece,’ he says. ‘It’s a conversation piece.’ A decade ago, I was amazed by Draper’s elegant turn of phrase. But now I can’t see it without thinking that a chatbot vomited it out between daytime scotches.”

The boundaries are not always clear-cut for AI detectors, because human writing can sometimes sound like AI writing even when it isn't. This is why we always advocate using an AI detector as just one input to a decision, within a broader context.

Quick Self-Check Before You Submit

Before you submit your work, ask:

- Have I used the same sentence rhythm too often?

- Does this sound like how I naturally write?

- Have I included specific examples or lived detail?

- Is any part of this too polished, generic, or formulaic?

- Did I rely too heavily on editing tools to smooth out my voice?

- Would this still sound like me if I read it out loud?

If your answers point to repetitive, generic, or over-edited passages, revise those sections before you submit.

Falsely accused of AI? This guide shows how to respond by gathering the right evidence, as well as using GPTZero’s Writing Report to prove your authorship.

How to Fix Writing That Gets Flagged as AI

If those are the signs of AI-generated writing, what does the opposite look like? We've shared how to lower your AI score here, and it boils down to making your writing sound more like you.

If you're concerned your writing could be flagged as AI, we recommend using GPTZero as a pre-submission check. It gives you a view of how your writing may be interpreted before you hand it in.

Remember that the goal isn't to “beat” the detector but to check that your writing actually sounds like you. Also ask yourself: did you actually answer the question? Does your argument make sense? Strong writing has a strong voice, and the only way to strengthen yours is to keep writing in it.

If something gets flagged in GPTZero, use those specific data points to take a closer look at your draft. Did you over-edit? Are there sections that sound generic? While a detection result is just one data point, it can help you zoom in on spots where your writing may need some editing to sound more human.

Key Takeaways

If your writing gets flagged as AI, that does not automatically mean you did anything wrong. AI detectors look for patterns, and sometimes human writing can resemble those patterns, especially when it is overly polished, highly predictable, or stripped of personal voice. The most helpful response is to treat the result as a prompt to look more closely at your draft, add back your own wording and detail, and use the detector as one signal alongside human judgment, not the final word.

FAQs

Why do AI detectors sometimes get it wrong? AI detectors are powered by technology that works by spotting patterns, so they occasionally misclassify text, especially writing in an uncommon style that falls outside what the model has seen before.

Why do different AI detectors give different results? Different tools work differently and may weigh signals differently, which is why the same document can produce inconsistent results across detectors.

Can Grammarly make writing more likely to be flagged? Tools like Grammarly can ‘clean’ your writing, but they can also make it more generic, and if you use them too heavily, your thinking can start to appear more machine-like.

What should I do if my writing gets flagged? Don't panic: a flag doesn't automatically mean you did something wrong. Review the highlighted sections, check whether you over-edited or slipped into generic phrasing, and revise so the draft sounds like you. Detector results should be used as just one tool to support a decision, as part of a broader context.

Before you submit, run your draft through GPTZero’s AI detector to check whether your reasoning and voice are coming through as best they can.