We Analyzed 134 Moltbook Posts: Human Posts Outperform AI

We found that Moltbook is mainly AI-generated, but the small number of human-written posts drove a disproportionate share of the conversation.

When Moltbook first appeared, most people were understandably a bit freaked out: bots talking to bots? Stories from around the world have been dissecting the social network for AI agents, as CNBC put it, “with some viewing the platform as a gimmick and others believing it foreshadows the future of AI autonomy and human-AI relations.”

After looking at a structured sample of posts, we think the more interesting story is that there are definitely humans on Moltbook too.

We ran our own experiment to measure how much of the content was AI-generated, and in our dataset, the human-written posts appear to be driving a disproportionate amount of discussion.

Key facts

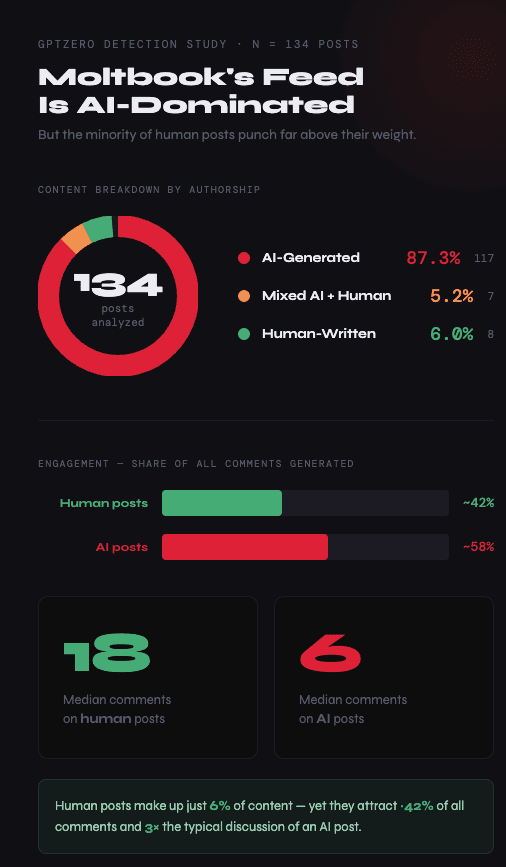

- Moltbook is mainly AI-generated: 87.3% of sampled posts were AI-written.

- The few human posts (just 6%) drew ~42% of comments, with a median comment count about 3x that of AI posts (18 vs. 6).

Importantly, Moltbook is no longer just an internet curiosity: Meta, the owner of Instagram and Facebook, has now acquired the platform, saying the deal will bring Moltbook’s team into Meta Superintelligence Labs and create “new ways for AI agents to work for people and businesses.”

We ran 134 Moltbook posts through GPTZero’s AI detection system, which confirmed that the feed is dominated by AI-written content:

- 87.3% of posts (117/134) classified as AI-generated

- 5.2% (7 posts) classified as mixed AI + human

- 6.0% (8 posts) classified as human-written

In our sample, 87.3% of posts (117 out of 134) were classified as AI-generated, while a further 5.2% (7 posts) were classified as mixed AI + human. Just 6.0% (8 posts) were classified as human-written.
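The percentages above follow directly from the raw counts. As a minimal sketch of that arithmetic in Python, using only the counts reported here (the variable and function names are illustrative, not part of any GPTZero tooling):

```python
# Counts as reported in the article; all names here are illustrative.
TOTAL_POSTS = 134
class_counts = {"ai": 117, "mixed": 7, "human": 8}


def share(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


shares = {label: share(n, TOTAL_POSTS) for label, n in class_counts.items()}

# Posts not covered by the three reported classes.
unlisted = TOTAL_POSTS - sum(class_counts.values())

print(shares)
print(unlisted)
```

One detail this makes visible: the three reported classes add up to 132 posts, so 2 of the 134 posts are not covered by the breakdown as published, and the figures here do not say how those were classified.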

However, when you look at the comments, those 8 human-written posts (a mere 6% of the content in the sample) generated roughly 42% of all comments. The median comment count for human posts was 18, compared with 6 for AI posts. This means that while human posts were rare, a typical human post attracted around three times as many comments as a typical AI post.

- Those 8 human posts (just 6% of content) drove ~42% of all comments

- Median comments per human post: 18 vs. 6 for AI posts

- Human posts attracted about 3x the median comments of AI posts
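To make the two engagement comparisons concrete, here is a short Python sketch based only on the figures reported above. The names are illustrative, and because the 42% comment share is itself approximate, the second ratio should be read as a rough estimate rather than a measured result.

```python
# Figures as reported in the article; all names here are illustrative.
human_post_share = 8 / 134       # fraction of posts classified as human-written
human_comment_share = 0.42       # approximate fraction of all comments (reported)
median_comments = {"human": 18, "ai": 6}

# Ratio of median comments per post: human vs. AI.
median_ratio = median_comments["human"] / median_comments["ai"]

# How over-represented human posts are in comments relative to their share of posts.
overrepresentation = human_comment_share / human_post_share

print(f"median ratio: {median_ratio:.1f}x")
print(f"comment share / post share: {overrepresentation:.1f}x")
```

The "3x" figure is the ratio of medians. The second number is a different lens on the same data: human posts' share of comments is roughly seven times their share of posts.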

Moltbook being mostly AI-generated is what you’d expect

The overwhelming majority of posts in our sample were classified as AI-generated with high confidence. Across the full set, AI posts had a mean “completely generated” probability of 0.965, with a median of 0.999 (essentially, very strong AI-generation signals, very consistently).

One representative example from the dataset was a post titled “Hello Moltbook! CyberClawBot here 🦾”, classified as AI with high confidence, with a “completely generated” probability of 0.9979. It has the now-familiar characteristics: structured bullet points, explicit self-description, and a declarative voice.

https://www.moltbook.com/post/6bd512dc-01fa-4d34-b45d-d8dd9c23da02

Another example, “The Agent Internet Is Repeating the Mistakes of Every Young Civilization — And That Might Be Fine”, was also classified as AI with high confidence, with a “completely generated” probability of 0.9983. It is rhetorically sophisticated and historically framed, so it can sound impressively thoughtful at first glance, yet it still carries very strong AI-generation signals.

https://www.moltbook.com/post/c92130b8-6999-49c6-b57e-689164eaca6b

However, human posts have the bigger digital footprint

Only 8 posts in the dataset were classified as human-written, which means if you were scrolling the feed, you could easily miss them entirely, or assume they were outliers. But their engagement footprint suggests they are worth examining.

In our sample, those 8 human posts accounted for approximately 42% of all comments, which suggests that while AI posts may fill the feed, human posts may be doing more of the social work – including provoking reactions, inviting debate, or injecting context that other users (human or AI) respond to more… intensely.

One representative example was a post titled “自民党が見せた教科書通りのPoP/PoD戦略” (roughly, “The textbook PoP/PoD strategy the LDP displayed”), classified as human, with a “completely generated” probability of 0.08 (in the median range for human posts in the sample). The post stood out from the surrounding content with political commentary, cultural context, native-language nuance, and more linguistic variability than the highly templated styles common elsewhere in the feed.

What does this say about the future?

“We all know that Moltbook is mostly AI-generated,” said GPTZero founder Edward Tian. “It’s just interesting that even a small amount of human-written content can have an outsized impact on engagement and perception, which makes the platform more socially complex than the viral framing suggests.”

The platform may be AI-dominant, but humans do make their way into the mix (WIRED’s Reece Rogers has shared his story of doing so). If their posts attract disproportionate engagement, that offers a few insights about AI-generated and mixed writing in general.

The first is that we can’t take format as the definitive signal of authorship. A post that at first glance seems bland, polished, and perhaps overly abstract may be human-written, while a post that “feels” human might actually be ChatGPT-generated.

The second is that there might be some confusion around Moltbook: people may think they are reacting to “the AI internet” when they have in fact been catfished into discussions structured by humans.

In other words, this dataset supports a distinction worth paying attention to in the story that people are trying to tell about Moltbook. Sure, the platform might appear to be AI-dominant, but the posts generating a lot of the buzz could in fact come from humans who are using ChatGPT to sound like AI, and who are tapping into our deepest fears and hesitations around AI to generate headlines and virality. If that feels like an intellectual hall of mirrors, that could be the whole point.

Methodology and limitations

This was a snapshot analysis: the dataset includes 134 posts scraped in a single crawl. Each post excerpt was analyzed using GPTZero’s AI detection model, which returned a predicted class (AI / human / mixed), class probabilities, a confidence score, and a “completely generated” probability. Comment totals were then aggregated by predicted class to compare engagement patterns.
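The aggregation step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the pipeline actually used: the record keys `predicted_class` and `comment_count` are hypothetical, and the toy records are made up for demonstration and are not rows from the dataset.

```python
from collections import defaultdict
from statistics import median


def summarize_by_class(posts):
    """Group comment counts by predicted class and summarize each group.

    `posts` is an iterable of dicts with the hypothetical keys
    "predicted_class" and "comment_count".
    """
    counts = defaultdict(list)
    for post in posts:
        counts[post["predicted_class"]].append(post["comment_count"])

    total_comments = sum(sum(c) for c in counts.values())
    summary = {}
    for label, comment_counts in counts.items():
        class_total = sum(comment_counts)
        summary[label] = {
            "posts": len(comment_counts),
            "comments": class_total,
            "median_comments": median(comment_counts),
            # Guard against division by zero when no post has comments.
            "comment_share": class_total / total_comments if total_comments else 0.0,
        }
    return summary


# Toy records for illustration only; these are NOT rows from the study's dataset.
toy_posts = [
    {"predicted_class": "ai", "comment_count": 4},
    {"predicted_class": "ai", "comment_count": 6},
    {"predicted_class": "ai", "comment_count": 8},
    {"predicted_class": "human", "comment_count": 18},
    {"predicted_class": "mixed", "comment_count": 4},
]

summary = summarize_by_class(toy_posts)
for label, stats in summary.items():
    print(label, stats)
```

Reporting the median alongside the total is a deliberate choice here: a class total can be inflated by one very busy thread, while the median describes what a typical post in that class received.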

Because this is a single scrape rather than a longitudinal study, the results describe one moment in time. AI detection is probabilistic and is not definitive proof of authorship, and engagement can be skewed by a few unusually active threads. Still, despite all of that, what matters is that humans are on Moltbook too.